Many companies are currently trialling AI tools. Writing texts, summarising emails, developing ideas, analysing data: much of this already works surprisingly well. Nevertheless, in practice, one feeling often remains: AI can do a lot, but it doesn’t know our company.

Although AI models possess a vast amount of general knowledge, they do not automatically understand a company’s specific characteristics, such as internal processes, customer expectations, exceptions, past experience, quality standards or previous decisions.

It is primarily the people within the company who possess this knowledge. And it is often precisely this knowledge that explains why experienced employees make better decisions than an AI system.

The real advantage of experienced staff: context

Those who have worked in a company for a long time often know more than is written in any manual.

For example:

- Which customers react particularly sensitively

- Which phrasing works well externally

- When a case is better escalated

- What internal agreements exist

- Which mistakes in the past proved costly

- Which exceptions are permitted in the process

- Where official rules and actual practice diverge

This knowledge is extremely valuable. At the same time, it is often hard to pin down. It resides in people’s minds, emails, meetings, chat histories or individual experiences.

For new employees, this is tedious. For stand-ins, it is risky. For managers, it is difficult to manage. And for AI, it is simply unusable unless it is available in a suitable form.

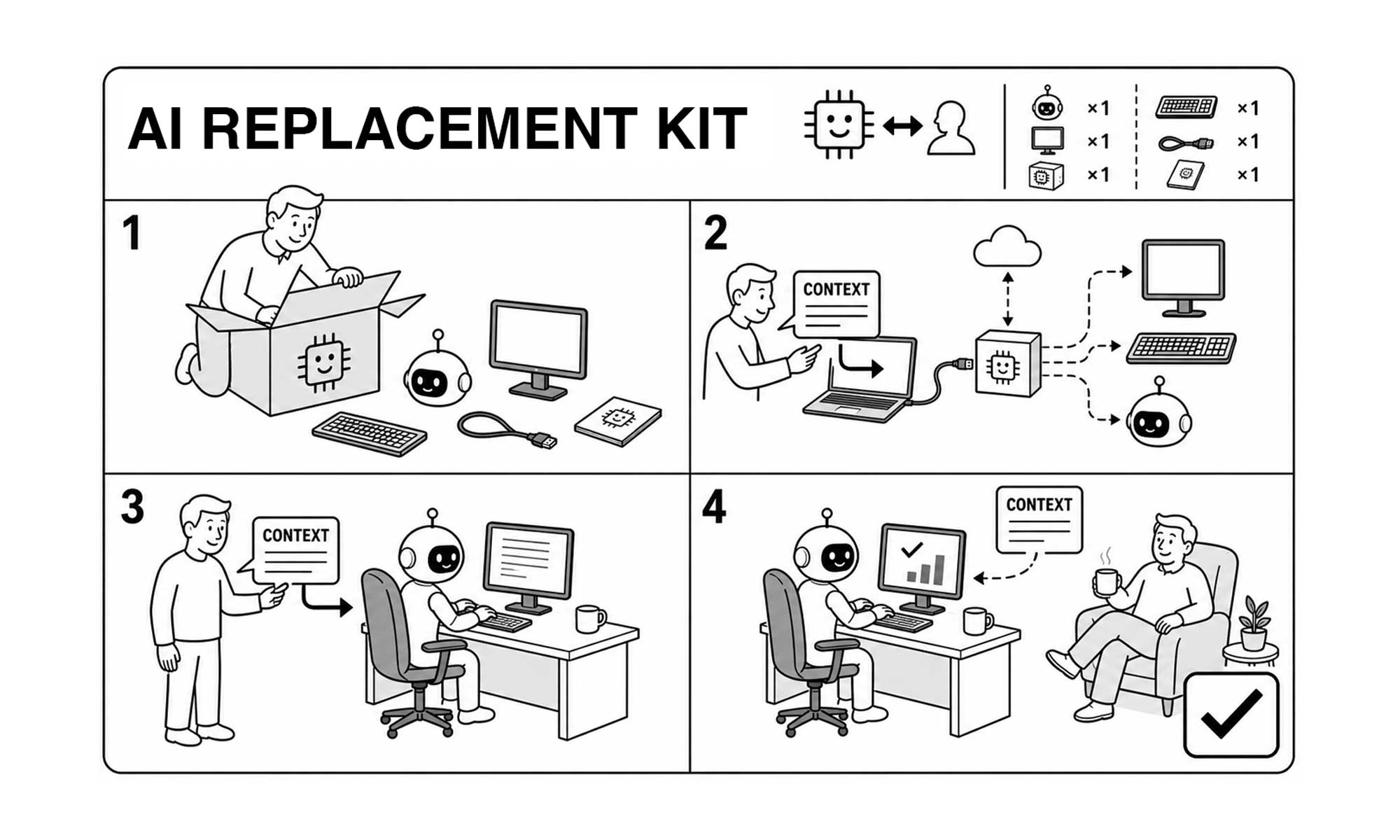

AI needs not just tasks, but context

Many AI applications start with prompts such as:

“Write a response to this customer complaint.”

The result usually sounds decent. But whether it really fits depends heavily on the context the AI is given.

Without context, a generic response is produced.

With context, the response can become genuinely helpful.

A simple difference:

Without company knowledge:

“We apologise for the inconvenience and will deal with your enquiry as soon as possible.”

With company knowledge:

“If a customer writes for the second time regarding the same delayed delivery, the case should no longer be handled with a standardised response. First check the order history, previous correspondence and the agreed delivery time. Then either provide a personalised response or escalate the matter to the service team.”

The second example isn’t simply a better prompt. It’s better business context. And it is precisely this context that determines whether AI merely generates nice-sounding text or actually makes work easier.
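A minimal sketch of what this difference can look like in practice, assuming the business context is kept as a short list of rules and simply placed in front of the task. The function name `build_prompt` and the prompt layout are illustrative assumptions, not a prescribed format for any particular AI tool.

```python
def build_prompt(task: str, context_rules: list[str]) -> str:
    """Combine a task with documented business rules into one prompt.

    Hypothetical helper: the layout is an assumption, not a tool-specific format.
    """
    if not context_rules:
        # No documented knowledge available: the AI only sees the bare task.
        return task
    rules = "\n".join(f"- {rule}" for rule in context_rules)
    return f"Company rules to follow:\n{rules}\n\nTask: {task}"


task = "Write a response to this customer complaint."
rules = [
    "If a customer writes for the second time about the same delayed "
    "delivery, do not use a standardised response.",
    "First check the order history, previous correspondence and the "
    "agreed delivery time.",
    "Then either provide a personalised response or escalate the matter "
    "to the service team.",
]

generic_prompt = build_prompt(task, [])       # task only
informed_prompt = build_prompt(task, rules)   # task plus business context
print(informed_prompt)
```

The task is identical in both prompts. Only the documented rules differ, which is the point of the comparison above.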

Why companies should document their knowledge better

To many, documentation initially sounds like bureaucracy. Like long PDFs, outdated wikis and filing systems where nobody can find anything. It doesn’t have to be like that.

Good documentation doesn’t mean writing everything down, but rather recording what people need to make good decisions.

This can help companies in many ways:

- New employees get up to speed with their tasks more quickly

- Stand-ins can take over with greater confidence

- Processes become more consistent

- Mistakes are repeated less often

- Knowledge is retained, even if experienced staff are absent or leave the company

- AI systems can be used much more effectively

The most important point: documented knowledge doesn’t just improve AI. It also improves the organisation itself. Because when you write things down, it often becomes clear where processes are unclear. Where there are no consistent rules. Where everyone works differently. Or where decisions depend on a few key individuals.

What exactly should be documented?

Not every detail is important. Knowledge that helps with decision-making is particularly useful.

1. Decision-making rules

When is a case approved?

When is it rejected?

When is it escalated?

Who is authorised to decide?

These points in particular are valuable for AI and staff because they provide guidance.

2. Typical exceptions

Many processes work well under normal circumstances. Things get tricky with special cases.

That is why the following should be documented:

- Which exceptions occur frequently?

- How can they be identified?

- What should be done in such cases?

- Who needs to be involved?

3. Good and bad examples

Examples are often more helpful than abstract rules. A well-written customer service message, a poorly resolved complaint or a successful quote demonstrate very clearly what quality means. Such examples are particularly useful for AI because they clarify style, structure and expectations.

4. Quality criteria

What constitutes a good result?

- factually correct

- friendly but professional

- legally sound

- fully documented

- understandable to customers

- internally traceable

Such criteria help people and AI alike.

5. Escalation points

AI should not decide everything. That is why clear boundaries are needed.

When must a human take over?

When should a manager be involved?

When is a legal review required?

When should an automated response not be sent?

This is particularly important for sensitive topics such as HR, complaints, contracts, finance or legal matters.
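Escalation points like these can be written down as explicit checks that run before any automated response is sent. The following is a rough sketch under stated assumptions: the `Case` fields, the topic labels and the threshold of two contacts (taken from the delayed-delivery example earlier in this article) are illustrative, and real rules would come from the people who know the process.

```python
from dataclasses import dataclass

# Topics named in this article as sensitive; the exact labels are assumptions.
SENSITIVE_TOPICS = {"hr", "complaints", "contracts", "finance", "legal"}


@dataclass
class Case:
    """Hypothetical minimal description of an incoming case."""
    topic: str
    contacts_about_same_issue: int = 1


def requires_human_review(case: Case) -> bool:
    """Return True if a human must take over instead of an automated reply."""
    if case.topic.lower() in SENSITIVE_TOPICS:
        return True
    # Repeated contact about the same problem should not get a standard reply.
    return case.contacts_about_same_issue >= 2


print(requires_human_review(Case("HR")))
print(requires_human_review(Case("delivery", contacts_about_same_issue=2)))
print(requires_human_review(Case("delivery")))
```

Keeping the rules this explicit also makes them easy to review with the responsible team before they are used.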

Data protection is part of the process from the outset

When companies make internal knowledge available for AI, they must consider data protection and confidentiality. This is because valuable context in particular often contains sensitive information: customer data, employee data, contract details, prices, complaints, internal assessments or trade secrets.

Important: Not everything that is known internally should be fed unfiltered into a knowledge database or an AI tool.

As specific as necessary, as anonymous as possible

Good:

“If a customer contacts us several times about the same problem, the case must be prioritised and reviewed personally.”

To be avoided at all costs:

“Customer Max Müller from Berlin always complains aggressively when his delivery is late.”

The first example contains useful process knowledge. The second contains unnecessary personal and judgemental information.

Data protection is not an obstacle here. On the contrary: it forces us to make a clear distinction between relevant business knowledge and sensitive individual case data.

This not only makes AI projects more secure, but often better too.
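As one small building block, obvious identifiers can be masked before text is stored in a knowledge base. The sketch below uses Python's standard `re` module and deliberately simple patterns, which are assumptions for illustration. It catches only email addresses and phone-like numbers. Names such as the one in the example above are not detected this way, so this does not replace a proper anonymisation process or a data protection review.

```python
import re

# Deliberately simple patterns (assumptions for illustration only).
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s/()-]{6,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return PHONE_PATTERN.sub("[PHONE]", text)


print(redact("Contact max@example.com or +49 30 1234567."))
```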

A simple rule for getting started

Many companies make the mistake of starting too big. They want to capture the entire company’s knowledge straight away or introduce a large AI platform.

It makes more sense to start small and specific.

For example, with a process that has these characteristics:

- It occurs frequently

- It takes a lot of time

- There are recurring questions

- Errors or rework occur regularly

- It depends heavily on the knowledge of individual people

- The data set is manageable

Suitable areas could include, for example, customer service, sales, quotation processes, internal IT, procurement or HR.
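To compare candidate processes, the six characteristics above can be turned into a simple yes/no checklist. This is only a sketch: the criterion names and the equal weighting are assumptions, and the score is meant as a conversation aid rather than a decision rule.

```python
# The six characteristics from the list above, as hypothetical checklist keys.
CRITERIA = (
    "occurs_frequently",
    "takes_a_lot_of_time",
    "recurring_questions",
    "regular_errors_or_rework",
    "depends_on_individuals",
    "manageable_data_set",
)


def pilot_score(answers: dict[str, bool]) -> int:
    """Count how many characteristics a candidate process fulfils."""
    return sum(1 for criterion in CRITERIA if answers.get(criterion, False))


customer_service = {criterion: True for criterion in CRITERIA}
procurement = {"occurs_frequently": True, "manageable_data_set": True}

print(pilot_score(customer_service), pilot_score(procurement))
```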

The first question should then not be:

“Which AI tool do we need?”

But rather:

“What knowledge do people need today to carry out this process effectively?”

Only once this question has been answered can AI provide meaningful support.

A practical five-step guide

A simple start could look like this:

1. Select a specific process

Not “AI across the whole company”, but a clear use case. For example: responding to customer enquiries, preparing quotations, sorting internal support requests or pre-screening job applications.

2. Gather practical knowledge

Talk to the people who know the process well. Questions might include:

- What do you check first?

- What mistakes happen frequently?

- When do you exercise caution?

- Which cases do you escalate?

- What information do you often find yourself lacking?

- What distinguishes a good case from a bad one?

3. Structure knowledge

The answers should not result in lengthy manuals, but rather short, usable building blocks:

- Process overview

- Decision rules

- Examples

- Checklists

- Escalation rules

- Quality criteria

4. Check data protection

Before using AI, the following should be clear:

- Which data may be used?

- Which data must be anonymised?

- Who is permitted to access what?

- Which content must not be transferred to external systems?

- Where is human review mandatory?

5. Test AI in a controlled manner

Only now is AI integrated. Not fully automatically straight away, but in a supportive role. For example, as a drafting aid, summariser, proofreading assistant or source of ideas. The results should be regularly reviewed and improved by experts.

What companies should avoid

A few mistakes occur particularly frequently.

The first mistake: introducing AI without an understanding of the process. This produces nice individual results, but no real benefit.

The second mistake: documenting too much and in an unstructured way. A huge wiki is of no use to anyone if it is not maintained.

The third mistake: only checking data protection at the end. This often means having to make changes retrospectively or halt projects.

The fourth mistake: adopting AI results without checking them. Clear oversight is essential, particularly for sensitive or business-critical issues.

And the fifth mistake: failing to involve experienced staff. Without their knowledge, AI remains superficial.

AI improves when companies better understand their own knowledge

AI does not replace good corporate knowledge. It relies on this knowledge.

The real lever therefore lies not only in the tool, but in the groundwork: understanding processes, making experiential knowledge visible, collecting good examples, clarifying data protection and establishing clear rules.

That sounds less spectacular than the ‘AI revolution’. But in practice, it is often far more effective. Because companies that structure their knowledge well benefit twice over:

People work more clearly. And AI can provide more meaningful support.

The crucial question is therefore not:

How do we use AI as quickly as possible?

But rather:

What knowledge do we need so that people and AI can achieve better results together?

Anyone who answers this question seriously lays the foundation for genuine digital improvement.

If you want to find out which processes in your company are suitable for the meaningful use of AI, it is worth taking a structured look at knowledge, data, risks and benefits. Often, a clearly defined use case is enough to gather initial, reliable experience.